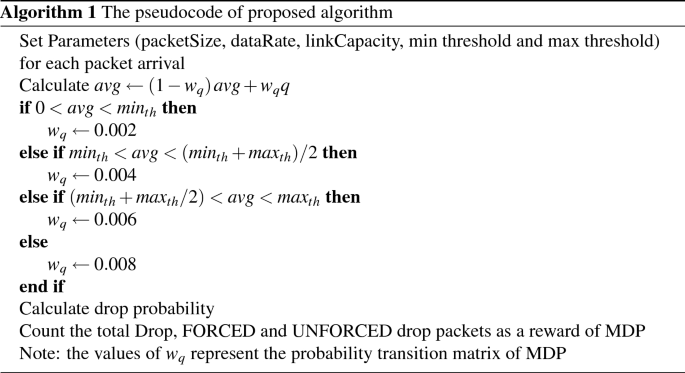

The AQM algorithm

The default of AQM algorithm work is represent in RED algorithm. The RED Inception of the congestion depends on the average queue size (avg), two thresholds ((min_{th}) and (max_{th})) and maximum drop probability ((max_p)). Each packet arrives at the router queue; the algorithm calculates the avg by using exponential weight moving average (EWMA) as a low pass filter, which is shown in Eq. (1) as follows:

$$begin{aligned} avg = left( 1-w_qright) avg+w_qq end{aligned}$$

(1)

where (w_q) is the queue weight and q is the current queue size. If the avg value is (min_{th}

$$begin{aligned} P= max_p(avg-min_{th})/(max_{th}+min_{th}) end{aligned}$$

(two)

The RED algorithm starts dropping all incoming packets when avg exceeds (max_th) to manage the congestion in the queue. The drawbacks of RED have a slow response to congestion and a difficulty tuning the parameter. Thus, these drawbacks make the algorithm work incorrectly when different applications and services use different data rates. The GRED has three values of thresholds ((min_th),(max_th) and double (max_th)) to reduce drop probability slop curve. The proposed algorithm used three thresholds as in GRED and the dynamic value of (w_q) selected based on the Markov process.

Markov Process

The MDP depends on the combination of ((s_t), (a_t), (r_t)t)17 with transitional probability to determine which action needs to be taken for a given state, which can be seen in Eq. (3) as follows:

$$begin{aligned} P(s_{t+1}vert s_t,a)=P(s_{t+1}=jvert s_t=i,a=k) end{aligned}$$

(3)

where (s_t) and (s_{t+1}) indicate the present state and the next state, respectively, while a indicates that an action needs to be taken. Yo and j can be 1,2,3, … that represent states, where j is the next state and Yo is the current state; and k can be 1,2,3, … to indicate which action is taken.

(r_t) is the reward (return) from the environment to the agent after an action is applied to the current state, as shown in Eq. (4), and this reward can be the maximum or minimum. In this work, the reward represents a minimum number of packets dropped as follows:

$$begin{aligned} R_t=sumi=t}^{infty } r_{i}=r_t+r_{t+1}+r_{t+2}+… end{aligned }$$

(4)

The MDP includes the discount factor (gamma ), which has a value between 0 and 1 to give a weight for future rewards. In this study, the value of (gamma )=0 includes only the immediate reward without involving future rewards, which is shown in Eq. (5) as follows:

$$begin{aligned} R_t=r_t+sum i=t+1}^{infty } gamma ^i r_i end{aligned}$$

(5)

To map MDP on the RED algorithm, there is a need to consider the average queue length, avg, as a state and the type of drop packets as an action. We assume four sets of states S=(s_1, s_2, s_3, s_4). Then we have the (4times 4) transition probability matrix shown in Eq. (6). (s_1) means the avg state TCP for a slow start below a minimum threshold ((0< avg < min_{th})), (s_2) indicates the avg state between (min_{th}) thresholds and halfway of the two thresholds ((min_{th}< avg < (min_{th} +max_{th})/2), s_3) indicates the state when the avg is between halfway of the two thresholds and below the (max_{th} ((min_{th} + max_{th})/2< avg < max_{th}))and (s_4) indicates the state when the avg is greater than (max_{th}) and less than double (max_{th}) ((max_th

$$begin{aligned} P_{ij} = begin{bmatrix} P_{00} &{} P_{01} &{} P_{02} &{} P_{03} \ P_{10} &{ } P_{11} &{} P_{12} &{} P_{13} \ P_{20} &{} P_{21} &{} P_{22} &{} P_{23} \ P_{ 30} &{} P_{31} &{} P_{32} &{} P_{33} \ end{bmatrix} end{aligned}$$

(6)

Design and methodology

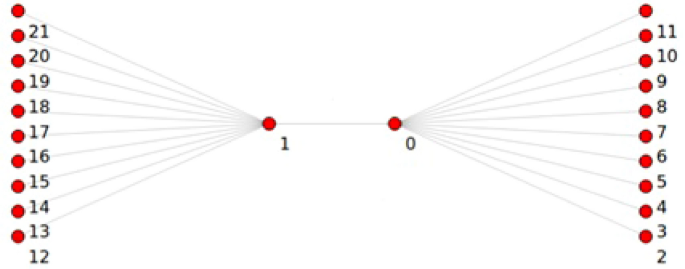

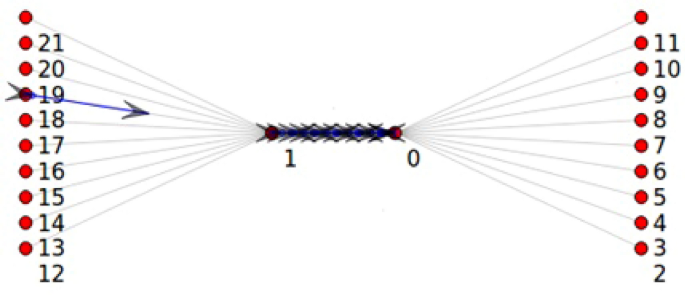

The Point-To-Point Dumbbell network topology built by NS3 with ON-OFF application was used in this study, as shown in Fig. 1. In the current study, the network has five nodes on each left and right side at beginning then increasing by 5 nodes in each simulation round up to 200 nodes, the source and the destination, respectively, and the two nodes in the middle that reflect the router in the core network create a bottleneck. Figure 1 shows that the sending nodes have not sent any packet, while in Fig. 2, the bottleneck link has been congested, and the drop packets procedure started.

NS3 Point-To-Point Dumbbell topology before starting simulation.

NS3 Point-To-Point Dumbbell topology after 10 sec of run time.

TCP slow start

The TCP protocol has a default congestion control with four phases: slow start, congestion avoidance, fast retransmit, and fast recovery18. The slow start phase allows the TCP to inform the capacity of links in the transition path; this occurs by duplicating the window size per RTT of each acknowledgment received. If no acknowledgment is received, the TCP indicates that congestion occurs, and the congestion avoidance phase starts; then, the window size increases by one for all successful acknowledgments. Therefore, the TCP slow starts increasing exponentially in each RTT, which means that congestion can occur within a short time.

Tuning algorithm parameters

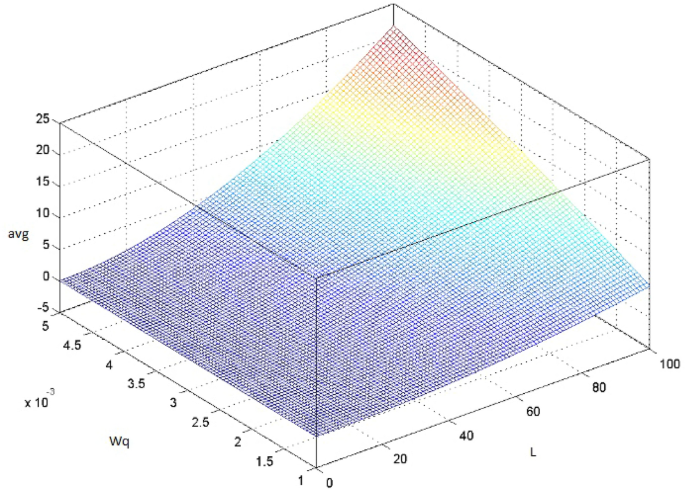

The default values in the RED algorithm parameters are (w_q)=0.002, (min_{th})=5, (max_{th})=15, (max_p)=1/50two. In the present study, the probability transition matrix (P_{ij}) in Eq. (6) is calculated based on queue weight (w_q). Equation (7) shows the relation between the load rate and (w_q)as well as their effect on the avg valuetwo as follows:

$$begin{aligned} avg=L+1+frac{(1-w_q)^{(L+1)}-1}{w_q} end{aligned}$$

(7)

where L represents the load rate of the sending packets. (w_q) works as a time constant in a low pass filter on the average queue value, which reflects the response time and queue weight of incoming packets, as shown in Fig. 3. Therefore, if (w_q) is too large, then the algorithm does not filter out the congestion that appeared in a short time, especially in the slow start of the TCP protocol, as mentioned in the previous subsection. If (w_q) is too small, then the algorithm response to congestion is too slow to reflect the change in queue size, and the small value of the queue weight is suitable for the slow start phase in the TCP. In this study the initial value of (w_q) set to zero and increment by 0.002 when the state of avg change to other state, these values selected to manage the exponential increase of TCP Slow Startup flow. Table 1 shows the range of the (w_q) used in the suggested algorithm.

The effect of the avg as a function of (w_q) and the load rate.

By using the values of the (w_q), the MDP probability transition matrix is obtained, shown in Eq. (6) as follows:

$$begin{aligned} P_{ij} = begin{bmatrix} 0 &{} 0.002 &{} 0.002 &{} 0.002 \ 0.004 &{} 0 &{} 0.004 &{} 0.004 \ 0.006 &{ } 0.006 &{} 0 &{} 0.006 \ 0.008 &{} 0.008 &{} 0.008 &{} 0 \ end{bmatrix} end{aligned}$$

(8)

The diagonal matrix assigns a zero value, which means there is no change in the queue weight value if the state is the same. The parameters of the implemented network are shown in Table 2: